Low-latency conference streaming to very large audiences

Jitsi today supports live-streaming conferences to large audiences through our Jibri tool, which renders all the media from the conference and forwards it to a streaming service such as YouTube.

This approach works, but it has limitations. In addition to being computationally expensive, it also introduces substantial latency to the media. This can be a problem when interaction is needed between the participants in the conference and the audience, for example for a text-based question-and-answer session.

This article describes a new approach to live-streaming media, which uses Jitsi’s built-in functionality, without transcoding, to reach potentially very large audiences with latency comparable to that of a live conference.

Overview

The basic approach to media distribution for this solution is straightforward: forward media to all the audience members in the same way that it is forwarded today to conference participants, i.e. as individual RTP streams over WebRTC. This re-uses Jitsi’s existing, well-tested technology for distributing media, delivering it to receivers, and playing it out to viewers.

The challenge, of course, is to scale Jitsi’s back-end services so they can support sending media to very large numbers of viewers, potentially in the hundreds of thousands or more. The rest of this article will discuss some of the architectural enhancements we need to make to Jitsi to support this.

The first insight that makes this possible is that in a streaming scenario, while the conference’s active participants need to know that they are being watched by an audience, they don’t need to know all the audience members’ identities or presence in real time; nor do the audience members need to know about each other. Thus, the system can be modified so that presence information about individual audience members is not sent to other conference participants, or to parts of the back-end that do not need it. This reduces the amount of signaling traffic substantially.
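As a rough illustration of this rule, here is a minimal sketch of the routing decision. It is not Jitsi’s actual signaling code: the `Role`, `Member`, and `presence_recipients` names are hypothetical, and the sketch assumes a simple in-memory list of members.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    PARTICIPANT = "participant"  # active, visible member of the conference
    AUDIENCE = "audience"        # receive-only viewer


@dataclass(frozen=True)
class Member:
    member_id: str
    role: Role


def presence_recipients(subject: Member, members: list[Member]) -> list[Member]:
    """Return the members who should be told about `subject`'s presence.

    Hypothetical rule, following the text above: audience presence is not
    broadcast, while active-participant presence goes to everyone else.
    """
    if subject.role is Role.AUDIENCE:
        # Audience members are invisible to participants and to each other.
        return []
    # Active participants are visible to all other members, audience included.
    return [m for m in members if m.member_id != subject.member_id]
```

With two participants and many viewers, a viewer joining or leaving generates no presence messages to other members at all, whereas a participant joining is still announced to everyone.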

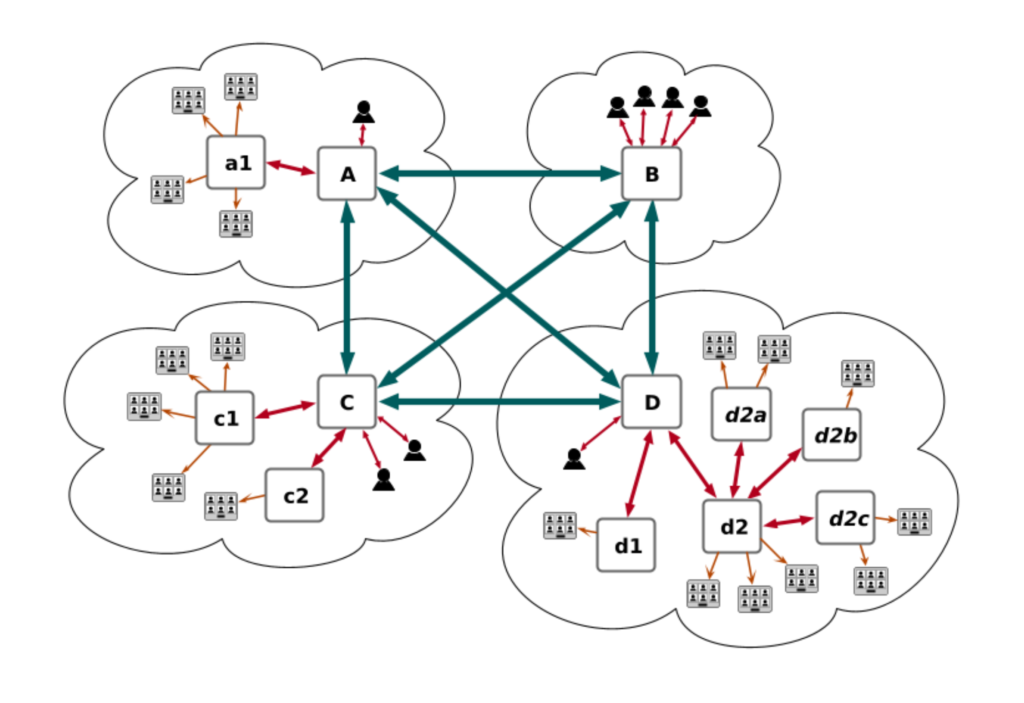

The second substantial change we are making to the back-end is to support more sophisticated topologies for relaying media among the Jitsi Videobridges. Currently, when more than one Jitsi Videobridge is used in a conference (in Jitsi’s Octo/Relay technology), the bridges are connected to each other in a full mesh. This topology minimizes the latency for media, but would not scale to very large conferences, where e.g. hundreds of thousands of participants might need several hundred bridges. If every bridge in such a conference were connected to every other one, the bridges could be overloaded just sending media out to the other bridges.
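To make the scaling problem concrete, the short sketch below counts relay links in a full mesh. The function name and the bridge counts are illustrative assumptions, not measurements from a real deployment.

```python
def full_mesh_links(bridges: int) -> tuple[int, int]:
    """Return (relay links per bridge, total relay links) for a full mesh."""
    if bridges < 1:
        raise ValueError("need at least one bridge")
    per_bridge = bridges - 1                # one link to every other bridge
    total = bridges * per_bridge // 2       # each link is shared by two bridges
    return per_bridge, total


# Illustrative bridge counts only.
for n in (3, 30, 300):
    per_bridge, total = full_mesh_links(n)
    print(f"{n} bridges: {per_bridge} links per bridge, {total} links in total")
```

With 300 bridges, each bridge would maintain 299 relay links, for 44,850 links overall. The per-bridge count grows linearly and the total grows quadratically with the number of bridges.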

Instead, we are developing technology that can arrange bridges into more elaborate topologies. In particular, our plan for very large conferences is to keep the conference’s active participants connected to bridges that are arranged in a mesh. The audience members would be connected to bridges whose interconnections form trees extending from various nodes of the core mesh. The core media servers would then only need to send media over a limited number of connections to the audience’s bridges, which would forward it to the audience, possibly relaying it through multiple bridges on the way.
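One way to picture this layout is the sketch below, which attaches audience bridges to the core mesh breadth-first under a fixed fan-out limit. It is only an illustration under stated assumptions: the `fan_out` limit, the bridge names, and both function names are hypothetical, and Jitsi’s real topology selection may work quite differently.

```python
from collections import deque


def build_audience_tree(core, audience, fan_out):
    """Assign each audience bridge a parent so that no bridge relays to more
    than `fan_out` audience bridges. Returns a dict of bridge -> parent."""
    if fan_out < 1:
        raise ValueError("fan_out must be at least 1")
    if audience and not core:
        raise ValueError("need at least one core bridge")

    parent = {}
    children = {bridge: 0 for bridge in core}
    candidates = deque(core)  # bridges that can still accept a child, shallowest first

    for bridge in audience:
        chosen = candidates[0]
        parent[bridge] = chosen
        children[chosen] += 1
        if children[chosen] == fan_out:
            candidates.popleft()  # this parent is full
        children[bridge] = 0
        candidates.append(bridge)  # new bridge can relay further down the tree
    return parent


def hops_from_core(bridge, parent):
    """Count relay hops between a bridge and the core mesh."""
    hops = 0
    while bridge in parent:
        bridge = parent[bridge]
        hops += 1
    return hops
```

Filling the shallowest bridges first keeps the trees as short as the fan-out limit allows, which matters because every extra relay hop adds a little latency for the viewers behind it.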

Finally, changes need to be made to the signaling servers used by the Jitsi back-end. While information about audience members only needs to be propagated to selected back-end infrastructure servers, information about a conference’s active participants needs to be forwarded to the entire audience. The existing XMPP servers that the Jitsi back-end uses aren’t designed for this level of load. Thus, we are developing solutions that mirror this participant information from one XMPP server to another, allowing each server to handle only a manageable number of client connections while still getting the information to the entire audience quickly.
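The mirroring idea can be sketched as a small in-memory simulation. This does not use any real XMPP library or protocol; `OriginServer`, `MirrorServer`, and their methods are hypothetical names chosen only to show how the fan-out is split across servers.

```python
class MirrorServer:
    """Hypothetical signaling server that holds a share of the audience."""

    def __init__(self, name):
        self.name = name
        self.clients = {}  # client id -> list of updates received

    def connect(self, client_id):
        self.clients[client_id] = []

    def deliver(self, update):
        """Pass a mirrored update to every locally connected client."""
        for inbox in self.clients.values():
            inbox.append(update)
        return len(self.clients)


class OriginServer:
    """Hypothetical server where the active participants are connected."""

    def __init__(self, mirrors):
        self.mirrors = list(mirrors)

    def publish(self, update):
        """Send one copy per mirror; return (copies sent, clients reached)."""
        reached = sum(mirror.deliver(update) for mirror in self.mirrors)
        return len(self.mirrors), reached
```

In this sketch the origin sends one copy of each participant update per mirror rather than one per viewer, and each mirror only fans out to its own clients.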

Stay tuned!

❤️ Your personal meetings team.

Author: Jonathan Lennox